The final version of the AutoRef software was published on May 19th. From now on, only bug fixes are expected in the simulator.

Robot Model & Control Software

A sample robot model is provided in the Webots repository. Teams are expected to provide their own robot models to compete with. However, they may choose to play with the robot of another team or with the sample robot model. To ensure that the robot models created by the individual teams do not violate the requirements set by the Technical Committee, all robot models need to be submitted ahead of time and must respect the robot model specifications.

The submission process consists of two steps. In the first step, an initial model needs to be submitted via the online submission system by April 23rd. Each team submitting a robot model will then be assigned two other teams' robot models to peer-review. Teams have until May 3rd to provide the results of the peer-review process via the submission system. On May 7th, teams receive feedback on their robot model both from the two teams peer-reviewing it and from the Technical Committee. In the second step, teams are given until May 23rd to implement the feedback and change the model. The second version of the robot model will be checked by the Technical Committee to ensure all requirements are met, and feedback will be sent to teams by May 31st.
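The submission deadlines above can be kept in a small lookup so a team can check which deadline comes next. This is only an illustrative sketch: the milestone labels and the `next_milestone` helper are hypothetical names, and the dates are stored as (month, day) pairs because the text does not state the year.

```python
# (month, day) pairs copied from the schedule in the text; the year is not
# stated in the source, so none is assumed here.
MILESTONES = [
    ("initial model submitted", (4, 23)),
    ("peer-review results submitted", (5, 3)),
    ("feedback from peer reviewers and Technical Committee", (5, 7)),
    ("revised model submitted", (5, 23)),
    ("Technical Committee feedback on second version", (5, 31)),
]


def next_milestone(today):
    """Return the first (label, date) whose date is on or after `today`, else None.

    `today` is a (month, day) tuple; tuples compare month first, then day.
    """
    for label, when in MILESTONES:
        if when >= today:
            return label, when
    return None
```

For example, `next_milestone((5, 4))` returns the May 7th feedback milestone, and any date after May 31st returns `None`.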

Webots soccer players software

In case of a complaint, the Technical Committee will review the game and decide either that the game result is valid or that the game needs to be replayed. In the latter case, the team showing unsportsmanlike behavior may receive a yellow card and will need to change its software to prevent the behavior seen in the game.

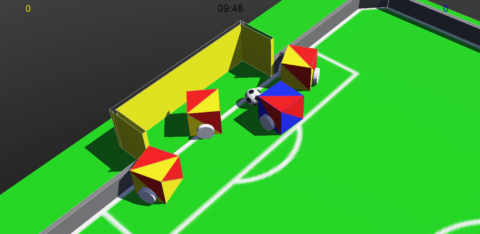

The latest version of the competition setup, including the final virtual environment, can be found in the official RoboCup-Humanoid-TC repository. Teams need to provide a robot model to compete with, as well as the robot control software.

The laws of the game have been adapted from the Humanoid Soccer Competition Laws of the Game 2020. The current draft of the rules can be found here. The changes marked are in comparison to the 2020 version of the Humanoid Soccer Competition Laws of the Game. The latest version of the rules was published on April 30th; no major changes are expected for the competition.

The official game environment, including a Darwin OP robot model, can be found in the Webots repository. The visuals of the game environment can be considered final and will only be updated if absolutely necessary.

The Humanoid League will provide an automatic refereeing system to enforce the laws of the game. The decisions made by the AutoRef software are final, even if they may, at times, be faulty. After the simulation has been conducted and the resulting video streamed, teams have half an hour to virtually sign the game result or to complain about the result to the Technical Committee. Complaints need to be based on serious violations of fair behavior repeatedly performed by the opponent team during the game.

Webots soccer players simulator

This website will give an overview of the technical details for the Virtual Humanoid Soccer competition. The open-source simulator Webots is used for the competition. Webots Repository with the latest simulator updates for the league: here. Robot Controller API Documentation: here. Server Infrastructure Specifications: here.

In this paper, we present an active vision method using a deep reinforcement learning approach for a humanoid soccer-playing robot. The proposed method adaptively optimises the viewpoint of the robot to acquire the most useful landmarks for self-localisation while keeping the ball in view. Active vision is critical for humanoid decision-making robots with a limited field of view. To deal with the active vision problem, several probabilistic entropy-based approaches have previously been proposed, but they are highly dependent on the accuracy of the self-localisation model. In this research, by contrast, we formulate the problem as an episodic reinforcement learning problem and employ a Deep Q-learning method to solve it. The proposed network only requires the raw camera images to move the robot's head toward the best viewpoint. The model achieves a competitive success rate of 80% in reaching the best viewpoint. We implemented the proposed method on a humanoid robot simulated in the Webots simulator. Our evaluations and experimental results show that the proposed method outperforms the entropy-based methods in the RoboCup context in cases with high self-localisation errors.
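The episodic Q-learning formulation mentioned in the abstract can be illustrated with a toy example. The sketch below is not the paper's method: it swaps the deep network and raw camera images for a small lookup table over hypothetical discrete head pan positions, and the reward values, position count, and hyperparameters are all assumptions chosen for illustration. What it shares with Deep Q-learning is the update target, `r + gamma * max Q(s', a')` for non-terminal steps and `r` alone when the episode ends.

```python
import random

# Hypothetical toy setup: 5 discrete head pan positions; index 3 is assumed
# to be the "best viewpoint". None of these values come from the paper.
N_POSITIONS = 5
BEST_VIEW = 3
ACTIONS = (-1, 0, 1)  # pan left, hold, pan right


def step(position, action):
    """Apply a pan action; the episode ends when the best viewpoint is reached."""
    new_position = min(max(position + action, 0), N_POSITIONS - 1)
    done = new_position == BEST_VIEW
    reward = 1.0 if done else -0.1  # assumed reward shaping
    return new_position, reward, done


def q_target(reward, next_q_values, done, gamma=0.9):
    """Q-learning target: r on terminal steps, r + gamma * max Q(s', .) otherwise."""
    return reward if done else reward + gamma * max(next_q_values)


def train(episodes=500, alpha=0.5, epsilon=0.2, max_steps=20, seed=0):
    """Tabular Q-learning with epsilon-greedy exploration over the toy task."""
    rng = random.Random(seed)
    q = [[0.0] * len(ACTIONS) for _ in range(N_POSITIONS)]
    starts = [p for p in range(N_POSITIONS) if p != BEST_VIEW]
    for _ in range(episodes):
        position = rng.choice(starts)
        for _ in range(max_steps):
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))  # explore
            else:
                a = max(range(len(ACTIONS)), key=lambda i: q[position][i])  # exploit
            nxt, reward, done = step(position, ACTIONS[a])
            target = q_target(reward, q[nxt], done)
            q[position][a] += alpha * (target - q[position][a])
            position = nxt
            if done:
                break
    return q


def greedy_action(q, position):
    """Return the pan action with the highest learned value at `position`."""
    return ACTIONS[max(range(len(ACTIONS)), key=lambda i: q[position][i])]
```

After training, the greedy policy should pan toward the assumed best viewpoint from either side. In a deep variant, the table `q` would be replaced by a network that maps camera images to one value per head action, trained toward the same target.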